AI has changed how products grow and find their place in a target market. Learn why AI makes PMF harder to measure and what actually matters today.

For a long time, product–market fit was something founders worked toward, something they grew into. It came gradually, with subtle signals at first, as you built, tested, and improved. Sometimes, it took months, sometimes longer.

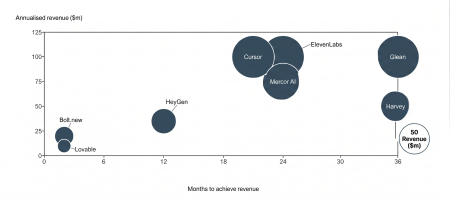

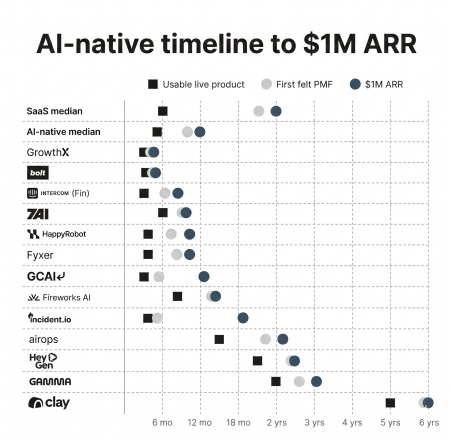

For many companies, especially SaaS, that’s still the traditional path to follow. However, in the AI era, the shape of PMF is different. Rather than building slowly over time, it often appears as an inflection point. Either the product sees immediate adoption and strong usage, or it doesn’t gain traction. There is less room in between. As a result, companies can reach $1M ARR in months and scale to $10M within a year when demand is obvious.

Source: Product Market Fit in the AI Age

But this comes with a new challenge. For an artificial intelligence company, early signals are easy to generate but hard to trust. Products can show growing usage, active engagement, and rising signups without becoming embedded in users’ workflows. The core issue is that usage is not dependency.

The Illusion: Why It’s Easier Than Ever to Think You Have PMF

Advances in technology, especially artificial intelligence, have changed the economics of building products. Development cycles are shorter. Costs are lower. Teams can launch faster and iterate more frequently. This increases the number of products in the market and, with it, the number of early traction signals.

We’ve seen founders struggle with this, and Andrei Manolache, our portfolio member and founder of DesignVerse, confirms as much.

AI has also changed how product quality is perceived in the earliest stages. It is now possible to launch a prototype that appears complete from day one. A founder can release an AI tool that immediately produces impressive outputs, attracting early users who sign up, test it, and share it with others. Yet none of that necessarily means the product solves a recurring problem or becomes embedded in a workflow.

This is where the first layer of false positives appears.

Signals that once pointed to genuine demand now appear earlier and more frequently, but two distinctions are often overlooked: usage is not dependency, and a strong demo is not the same as real value.

Distribution amplifies the effect. Social platforms, communities, and launch ecosystems can take a small amount of traction and make it look significant. A product widely shared on Twitter or successfully launched on Product Hunt can generate thousands of visits, hundreds of signups, and strong short-term engagement. These metrics can resemble product-market fit, but they are often driven by curiosity rather than dependence.

Part of this confusion comes from how inefficient product creation used to be, especially across design and engineering, says Andrei Manolache.

“Huge amounts of time and resources were wasted maintaining two separate systems, keeping them updated and correlated. On the product side, companies needed designers to maintain the components from the design system into tools like Figma, which required endless, repetitive daily work.

On the development side, companies needed to find hacky ways to translate the said Figma components into reusable code artifacts, which oftentimes required constant rework and refinements.”

AI has compressed much of that process. Products can now be built faster, launched sooner, and polished earlier in their lifecycle. As these distinctions blur, identifying true product-market fit becomes more difficult. At the same time, expectations around quality have begun to diverge by user segment.

How users define “good enough” increasingly depends on who they are, according to Andrei Manolache, founder of DesignVerse.

“It is a difficult question to answer. For regular users or consumers, they have already gotten pretty used to the “slop” outputs, meaning that all products look extremely similar, and the functionalities are very limited.

For enterprise users, it’s a totally different story. We built DesignVerse specifically to assist companies to deliver large scale software that cannot fail, therefore the quality bar is much higher than in consumer apps.”

Yet AI is not only creating noise around early traction. It is also unlocking categories that were previously uneconomic or impossible to operate at scale. That is the view of Matei Trebien, founder and CEO of VoicePatrol.

“Easier, for both VoicePatrol and our AI-focused competitors. When you look at the only market alternative, it’s hiring tens or hundreds of thousands of human moderators to listen non-stop to 100% of the audio traffic.

You have to perfectly organize shifts so not a single second of audio slips through, and those humans have to be 100% focused and present the entire time. AI simply scales this impossible human task.”

The New Definition: What PMF Actually Means in AI

The core idea of product-market fit has not changed. Companies still need to build products people genuinely want. What has changed is how demand translates into something durable. In the AI era, the unit of analysis is no longer just the product itself.

In earlier software models, PMF could often be judged at the product level. Adoption, engagement, and retention were commonly treated as reliable proxies for value. If users kept returning, the assumption was that the product had found its place.

That framework is becoming less complete in AI. Products are increasingly judged not only by whether they attract users, but by how effectively they reduce effort, improve outcomes, and integrate into existing workflows. In many cases, the real value lies in what the system enables rather than in the interface alone.

This is also visible in how users interact with the data itself, says Nikola Komes, founder of InsiderCX.

PMF in AI is best analyzed across three layers: the problem being solved, the operational impact created, and the system context in which the product runs.

Three-layer framework for modern PMF

Product-market fit in AI needs to be evaluated across three layers. Looking at only one can be misleading. A product may attract users without improving economics, or automate tasks without becoming embedded in workflows. To understand whether demand is real and durable, each layer needs to be examined in sequence.

- Pain — Is the problem critical and frequent?

- Proof — Does the product change real metrics?

- System — Is it embedded in existing workflows?

- Pain (still the foundation)

Not all problems carry the same weight. Some are tightly linked to core business processes. They appear frequently, have measurable cost implications, and are directly tied to performance metrics. Others are more peripheral, occurring intermittently with limited impact on outcomes.

Product-market fit tends to emerge first in the former category. Functions such as revenue generation, customer support, or software development involve continuous workflows with clear performance constraints. Inefficiencies in these areas are observable and costly. By contrast, problems that arise less frequently or matter less to core metrics tend to produce weaker adoption patterns, even when the product itself is well designed.

This helps explain why AI products focused on high-value, recurring pain points can scale quickly to sustainable usage. PMF often begins where the problem is already important enough to demand a solution, rather than where technology is simply better.

- Proof (what fundamentally changed)

In earlier software models, proof could often be validated through efficiency gains at the margin. Even small reductions in cost or modest productivity improvements could justify adoption if the product was broadly useful and easy to deploy. Validation now depends on something more concrete.

In AI, expectations are different. Products increasingly need to participate in the work itself, not just support it. This changes how proof is measured. Instead of asking whether the product is helpful, companies ask whether it changes key operational metrics tied to revenue, speed, or labor efficiency.

Instead of focusing on engagement, teams need to look at:

- Reduction in process time (for example, faster ticket resolution or shorter sales cycles)

- Increase in output per unit of labor (for example, more tickets handled per agent or more code produced per engineer)

- Decrease in manual intervention (for example, fewer handoffs, escalations, or required reviews)

In customer support, for instance, the relevant signal is not how often agents use AI suggestions, but whether the system reduces resolution times or increases automation rates for incoming tickets.

In sales, the question is not whether AI can generate messages, but whether it can replace parts of prospecting and outreach in a way that improves pipeline creation. This is where the concept of automation rate becomes visible: the share of work completed directly by the system versus by humans.

At this stage, proof means observable changes in throughput, cost structure, or decision flow. Without those changes, a product may be used, but not relied on.
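The automation rate and process-time signals described above can be made concrete with a short calculation. The sketch below is illustrative only: the `Ticket` record, its field names, and the sample values are assumptions, not data from any company quoted in this article.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    # Hypothetical record shape; the field names are assumptions.
    resolved_by_system: bool   # True if the ticket closed with no human touch
    resolution_minutes: float  # time from open to close


def automation_rate(tickets: list[Ticket]) -> float:
    """Share of tickets completed directly by the system (0.0 to 1.0)."""
    if not tickets:
        return 0.0
    automated = sum(1 for t in tickets if t.resolved_by_system)
    return automated / len(tickets)


def mean_resolution_minutes(tickets: list[Ticket]) -> float:
    """Average time to resolve a ticket, in minutes."""
    if not tickets:
        return 0.0
    return sum(t.resolution_minutes for t in tickets) / len(tickets)


# Made-up sample data, not real results.
sample = [
    Ticket(resolved_by_system=True, resolution_minutes=5.0),
    Ticket(resolved_by_system=True, resolution_minutes=7.0),
    Ticket(resolved_by_system=False, resolution_minutes=60.0),
    Ticket(resolved_by_system=False, resolution_minutes=48.0),
]

print(f"automation rate: {automation_rate(sample):.0%}")
print(f"mean resolution: {mean_resolution_minutes(sample):.1f} min")
```

Tracking both numbers over time, rather than how often agents open the tool, is one way to separate usage from reliance.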

- System (what’s entirely new)

The defining characteristic of product-market fit in AI is where the product sits within the broader system. In earlier software models, products could remain relatively modular. They delivered value independently, without requiring deep integration into surrounding tools, data sources, or operating processes.

That is less true in AI. Value is now tightly linked to context. To perform effectively, the product often needs access to proprietary data, alignment with existing workflows, and a clear role within ongoing operations. Without those elements, performance becomes inconsistent, outputs lose relevance, and trust is harder to build.

PMF now moves from a product-level concept to a system-level one. The question is no longer only whether the product is useful on its own, but whether it functions effectively inside the environment where work already happens.

Consider finance. An AI tool may need access to transaction data, reporting systems, approval chains, and internal controls. Its success depends not only on model capability, but on how well it operates within that ecosystem.

The same logic applies across functions. In sales, the product may need CRM data, pipeline workflows, and outreach systems. In marketing, it may require campaign data and content operations. In customer support, it may depend on ticketing systems, knowledge bases, and escalation processes. In engineering, it may need code repositories, deployment tools, and review workflows.

The Mechanism: How AI Companies Actually Reach PMF Today

The process of reaching product–market fit has not just changed in structure. It has changed in speed, according to Nikola Komes, founder of InsiderCX.

“It made it both easier and harder. If we are talking about a super-early stage, it’s now incomparably easier to build an MVP and deliver first value to market and test your assumptions.

However, when trying to scale, I think pretty much everyone in B2B SaaS is starting to see that the buyers are super-saturated with AI-powered ads, outbound mails, calls etc. So in general it’s easier to build, but it’s also much harder to reach the target customers.”

If usage alone is no longer a sufficient signal, then product-market fit must be measured differently. That also influences how companies actually achieve it.

In traditional SaaS, getting to PMF and then to $1M ARR was a long, gradual process. On average, it took about two years to feel the early signs of fit, and often longer to translate them into meaningful revenue. Teams would iterate carefully with small groups of users, improving the product step by step. Progress was steady, but slow.

In AI, that timeline is compressed. Recent data show that AI-native companies reach $1M in ARR in roughly 12 months on average, with some doing so much faster. In certain cases, products have generated millions in revenue within weeks of launch, even while still evolving.

Source: How the top AI-native startups launch and grow

But speed alone does not guarantee expansion. In many cases, the first bottlenecks appear not in the product, but in how growth is operationalized, says Matei Trebien, founder and CEO of VoicePatrol.

“It’s ironic, but adoption actually slowed down right after we achieved our first big business goal: dominating a small, non-obvious market – VR.

We achieved this in just a few months, and honestly, the whole team was so zeroed in on capturing this initial market asap that we forgot to prep our bizdev channels in time to expand beyond VR, despite the product being ready for it. That’s exactly the bottleneck we’re clearing out now.”

1. Start with the metric, not the feature

In AI, features are easy to build. That also makes it easy to ship functionality that looks useful but doesn’t move any meaningful metric. As a result, teams that start from features often accumulate complexity without improving outcomes. The more effective approach is to define a measurable objective before building anything.

That objective usually ties back to how work is actually performed:

- reducing the time required to complete a task

- increasing the volume of work handled per person

- improving conversion from usage to paid adoption

- increasing retention or repeat usage

These metrics anchor development in outcomes rather than capabilities. Instead of building a “better assistant,” for example, a company might focus on reducing ticket resolution time in customer support. The feature set then becomes a series of experiments designed to improve that specific metric.

This framing changes how decisions are made. Features are no longer goals. They are hypotheses. If a feature does not move the metric, it is removed or replaced. This prevents the accumulation of “impressive but unused” functionality and keeps the product aligned with real impact.
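Treating a feature as a hypothesis can be reduced to a simple keep-or-remove rule. The sketch below is one possible formulation, not a prescribed method: the function names, the 5% default threshold, and the example numbers are all assumptions. It also ignores sample size and statistical significance, which a real experiment would need to account for.

```python
def relative_change(baseline: float, observed: float) -> float:
    """Fractional change of observed vs. baseline (negative means a drop)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (observed - baseline) / baseline


def keep_feature(
    baseline: float,
    observed: float,
    min_improvement: float = 0.05,  # assumed threshold: 5% improvement
    lower_is_better: bool = True,   # e.g. ticket resolution time
) -> bool:
    """Keep the feature only if it moved the target metric enough."""
    change = relative_change(baseline, observed)
    improvement = -change if lower_is_better else change
    return improvement >= min_improvement


# Hypothetical numbers: mean ticket resolution time in minutes.
print(keep_feature(baseline=40.0, observed=34.0))  # 15% faster
print(keep_feature(baseline=40.0, observed=39.5))  # about 1% faster
```

With these made-up inputs, the first feature clears the threshold and the second does not, so the second would be a candidate for removal.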

The move from features to outcomes often requires redefining what the product actually delivers. In many cases, it’s not the data itself that creates value, but what users can do with it, says Nikola Komes, founder of InsiderCX.

“We wanted InsiderCX to become a tool for better decision-making from day one. One way to think about it is insurance from bad decisions. However, that is easier said than done because the data must be presented in a way that users can understand it without statistical training or data savviness.

We have gradually moved away from purely presenting the feedback and the results to giving concrete and specific recommendations on next steps and the desired course of action by using AI.”

The same principle applies in infrastructure-heavy AI products, where usefulness alone is not enough. Product-market fit often depends on crossing a specific threshold of accuracy, reliability, and operational efficiency, says Matei Trebien, founder and CEO of VoicePatrol.

“I’ve been building machine learning models since before the original Transformer architecture paper dropped, and I zeroed in on Audio ML before transformer-based applications like ChatGPT blew up.

If we hadn’t adapted that specific architecture directly for our niche use case, we never could have achieved State-of-the-Art (SOTA) Recall, Precision (and thus F1), and Specificity – especially for the highly context-heavy events.”

In other words, modern PMF in AI is often reached not when a feature exists, but when performance becomes strong enough to deliver measurable results consistently.
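For readers less familiar with the metrics named in the quote, the sketch below computes them from a confusion matrix using their standard definitions. The counts are invented for illustration and are not VoicePatrol results; the function name is likewise an assumption.

```python
def moderation_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Standard classification metrics from confusion-matrix counts.

    tp: toxic events correctly flagged
    fp: harmless events wrongly flagged
    tn: harmless events correctly ignored
    fn: toxic events missed
    """

    def ratio(numerator: float, denominator: float) -> float:
        # Guard against empty classes instead of dividing by zero.
        return numerator / denominator if denominator else 0.0

    precision = ratio(tp, tp + fp)    # of everything flagged, how much was toxic
    recall = ratio(tp, tp + fn)       # of everything toxic, how much was caught
    specificity = ratio(tn, tn + fp)  # of everything harmless, how much was left alone
    f1 = ratio(2 * precision * recall, precision + recall)
    return {
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
    }


# Made-up counts for illustration only.
for name, value in moderation_metrics(tp=80, fp=20, tn=880, fn=20).items():
    print(f"{name}: {value:.3f}")
```

F1 is the harmonic mean of precision and recall, which is why the quote treats it as following from the other two.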

2. High-velocity iteration loops

The next advantage comes from how quickly a team can iterate. In AI, the cost of testing ideas is low. Teams can deploy changes frequently and observe results in short cycles. This changes the competitive advantage from long-term planning to rapid learning. Effective teams operate in loops like this:

test → measure → decide → iterate

In practice, early signals of value often appear quickly, but they only matter if teams can translate them into sustained adoption. That usually depends on how fast feedback is captured, interpreted, and turned into product improvements.

Nikola Komes, founder of InsiderCX, says this dynamic has been visible in customer-facing environments where operational gains can be measured directly.

“The value first starts showing in a boost of online reputation due to the high volume of satisfied patients, which we redirect to online platforms like Google or Trustpilot. However, this is a boost that plateaus quickly. The real value lies in reducing patient churn by proactively contacting patients that had an issue. Typically, they reduce their SLAs from weeks (from identifying the issue to contacting the patients) to hours within the first months of using us.

The next big area is improving internal processes – by having a deep dive into each area of the patient journey, they are ready to start working on their processes.”

The lesson is that visible engagement is rarely enough. What matters is whether early usage evolves into measurable operational gains that deepen over time. These cycles often occur weekly or more frequently. The goal is not to get things right the first time, but to converge on what works through repeated exposure to real usage.

It also requires a different level of prioritization from founders. Iteration is fast, so teams need to be selective about what they continue to invest in. Features that do not show clear impact are removed quickly. Resources are concentrated on areas where measurable improvements appear.

Over time, this creates a compounding effect. Faster cycles lead to more data. More data leads to better decisions. Better decisions improve the product. The product, in turn, generates stronger signals, which further accelerate learning.

As teams iterate in real environments, second-order effects and outcomes emerge that were not part of the original hypothesis, says Matei Trebien, founder and CEO of VoicePatrol.

“We always expected that the biggest, most toxic offenders would be the first to complain to game admins about mute/ban appeals. That’s exactly why we made sure the platform hands admins all the audio evidence they need on a silver platter.

The real surprise, however, was seeing how much overall voice chat engagement spikes across the board once those super-toxic outliers are eliminated. A 10% jump in 5 weeks is huge. The MVPs are just having more fun without the griefing.

The absolute coolest moment came when a studio built a custom in-game UI alert system tied to VoicePatrol. Players are placed in rooms with a handful of other random players. When someone was being severely toxic, a UI notification would pop up for everyone else in that room saying, “Player X was sniped by VoicePatrol for being a racist douchebag” – and the remaining players would literally cheer.”

This is the strategic value of high-velocity iteration. Fast cycles do not merely improve products faster; they uncover behaviors, incentives, and sources of value that static planning often overlooks.

3. Deployment over selling

In traditional models, products were often sold before they were fully experienced. Marketing, demos, and sales conversations played a central role in driving adoption.

In AI, this approach is less effective. Because the value of AI products depends on how they perform in real workflows, they are best understood through direct use. Users need to see outcomes, not promises.

This changes the go-to-market model. Instead of leading with explanations, teams focus on exposing users to the product in real-world contexts. This often takes the form of hands-on trials, live implementations, or embedding the product into a small part of a workflow. For example, rather than pitching an AI support system, a company might deploy it on a subset of tickets and measure its performance. Adoption then follows from demonstrated results.
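Deploying on a subset of tickets requires some rule for deciding which tickets go to the AI system. One common approach, sketched below under assumed names, is to hash each ticket ID into a stable bucket so that the same ticket always lands in the same group. The `in_pilot` function, the ID format, and the 10% fraction are hypothetical.

```python
import hashlib


def in_pilot(ticket_id: str, pilot_fraction: float) -> bool:
    """Deterministically assign a ticket to the pilot group.

    The same ticket_id always gets the same answer, so a ticket never
    switches groups partway through the trial.
    """
    if not 0.0 <= pilot_fraction <= 1.0:
        raise ValueError("pilot_fraction must be between 0 and 1")
    digest = hashlib.sha256(ticket_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < pilot_fraction


# Hypothetical ticket IDs; route roughly 10% of them to the AI system.
ticket_ids = [f"TICKET-{n}" for n in range(10_000)]
pilot = [t for t in ticket_ids if in_pilot(t, 0.10)]
print(f"{len(pilot)} of {len(ticket_ids)} tickets routed to the pilot")
```

The pilot and non-pilot groups can then be compared on the same outcome metric, such as resolution time, before the rollout is widened.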

Users do not need to be convinced in advance; they observe the impact firsthand and make decisions based on that experience. As a result, growth is tied more closely to delivered outcomes than to messaging. This is especially visible in categories where trust is a barrier. In these markets, value cannot be explained up front. It has to be seen in action.

Matei Trebien, founder and CEO of VoicePatrol, says this has shaped adoption in voice moderation:

“For studios that have never rolled out voice moderation at scale, there’s a totally valid fear of spying on their players and censoring harmless trash talk.

That resistance vanishes the second they sign up for our free trial and deploy VoicePatrol in monitoring mode (zero automated actions, just listening). On Day 1, they get immediate, visual value. Their dashboard gets flooded live with thousands of truly severe toxic events. We aren’t flagging friendly banter; we’re catching everything from easy-to-spot racism to context-convoluted child grooming. Plus, they see that we anonymize the data, so we can’t tell who is who on our end.

Once they flip the switch on automated actions, they see a powerful causal chain within five weeks: severe toxicity drops by 55% -> voice chat engagement increases by 10% across the entire player base (turns out, it’s way more fun to play when the ultra-toxic offenders are gone) -> ARPPU increases by 5%.

For studios already using a legacy moderation provider, the initial resistance comes from our size and the fact that we’ve only been fully in the market for a year. They understandably doubt that we can be a fraction of the cost of our competitors while maintaining a higher standard of precision and speed. Again, the free trial kills those concerns.

They see the exact same Day 1 visual value and the exact same 5-week impact on their core business metrics. It ultimately ends up being a no-brainer financial deal: they can finally afford 100% audio coverage and still see a 2-3X decrease in their monthly moderation bill.”

4. Forward-deployed teams

The final component is organizational. In AI, product development cannot be fully separated from the environment where the product is used. The variability of real-world workflows, data quality, and edge cases makes it difficult to design solutions in isolation.

This is pushing companies toward a more integrated operating model, one in which product and engineering teams remain close to the customer context. They observe how the system behaves, where it breaks, and how users adapt around it.

The result is faster feedback loops and better product judgment. Teams gain a more accurate understanding of real workflows rather than relying only on reported needs. They can identify constraints that never appear in early-stage testing and adjust the product accordingly.

Andrei Manolache, founder of DesignVerse, argues that this proximity is reshaping how software teams themselves are organized.

“We are removing the feedback loop between the design and development teams.

We think that software development will be fundamentally transformed into smaller dedicated teams that will work in a Design Sprint setting to launch individual features, which will converge into the core software product offering.”

This model matters because many of the most valuable insights emerge only after deployment. Real usage often reveals behaviors that were never part of the original product hypothesis. Andrei Manolache goes on to give an example:

“One surprise and an unexpected use case was the staff bonusing.

In the beginning, we were really focused on surfacing problems and areas for improvement. Then, we heard that one client is using the product for selecting doctors and staff that will receive a quarterly bonus.”

That kind of discovery is difficult to generate from planning sessions alone. It tends to come from teams that stay close to customers long enough to observe how products are actually absorbed into day-to-day operations.

Future Thoughts on Product-Market Fit in Practice

AI will likely keep changing how products and companies grow and scale, but one thing stays the same: you still need to build something people want.

A product demonstrates fit when it drives operational impact, reducing time, increasing output, or replacing manual effort, and when it becomes embedded in existing workflows. In this context, PMF is not established by activity but by the extent to which the product’s absence would disrupt the system in which it operates.

At the same time, product-market fit is also influenced by forces outside the product itself. Tighter global budgets, greater scrutiny on costs, and shorter planning cycles have raised the threshold for adoption. Buyers are less willing to pay for tools that are merely interesting or marginally useful.

Meanwhile, advances in AI have sharply reduced the cost of building and launching new products. Supply has increased, differentiation has compressed, and competitors now appear faster than ever before. Distribution has also become more efficient, allowing products to gain traction faster while intensifying the speed of competitive response.

In this environment, PMF is established when a product creates measurable, system-level effects that persist under constraint. That may mean lowering costs, increasing throughput, improving reliability, or replacing manual processes in a way that continues to justify budget, attention, and internal adoption.

Looking ahead, product-market fit is likely to be determined less by the speed of initial adoption and more by the durability of impact amid ongoing economic and technological pressures. Early traction may still matter, but lasting fit will belong to products that remain valuable when budgets tighten, alternatives multiply, and expectations continue to rise.